ISO/IEC 42005: AI System Impact Assessment

This standard provides guidance for organizations conducting AI system impact assessments.

ISO/IEC 42005 sets out a framework for AI system impact assessment that guides organizations in evaluating an AI system's potential effects on individuals and society. It connects AI innovation with regulatory compliance by offering structured processes for responsible deployment and governance in organizations of any size.

ISO/IEC 42005 provides a comprehensive framework for AI system impact assessment that helps organizations navigate the complex intersection of technological innovation and social responsibility. By implementing structured assessment processes aligned with this international standard, businesses can mitigate risks, enhance trust, and maximize the benefits of their AI investments.

As AI continues to transform industries and societies, responsible governance becomes increasingly important. ISO/IEC 42005 offers a valuable roadmap for organizations committed to developing and deploying AI systems that create value while minimizing potential harms. Through thoughtful implementation of this standard, businesses can contribute to a future where AI technologies serve human needs and values while respecting fundamental rights and ethical principles.

The Definitive Framework for AI System Impact Assessment

Artificial intelligence systems are becoming increasingly integrated into critical aspects of business operations and everyday life. With this integration comes the responsibility to understand and mitigate potential impacts on individuals, communities, and society. ISO/IEC 42005, published in April 2025, establishes a comprehensive framework for AI system impact assessment practices, bridging the communication gap between evolving AI technologies and regulatory requirements.

Understanding ISO/IEC 42005

ISO/IEC 42005 provides organizations with structured guidance for conducting thorough impact assessments of AI systems. It helps them evaluate both the intended and unintended consequences of an AI system, with a focus on potential effects on individuals and societies, so that those effects can be identified and managed.

According to the ISO official documentation, the standard "provides guidance for organizations performing AI system impact assessments for individuals and societies that can be affected by an AI system and its intended and foreseeable applications." It includes considerations for when and how to perform such assessments throughout the AI system lifecycle, along with documentation guidelines.

What makes ISO/IEC 42005 particularly valuable is its applicability across organizations of all sizes and types. Whether you're a startup developing innovative AI solutions or an established enterprise integrating AI into existing processes, this framework offers a structured approach to responsible AI deployment.

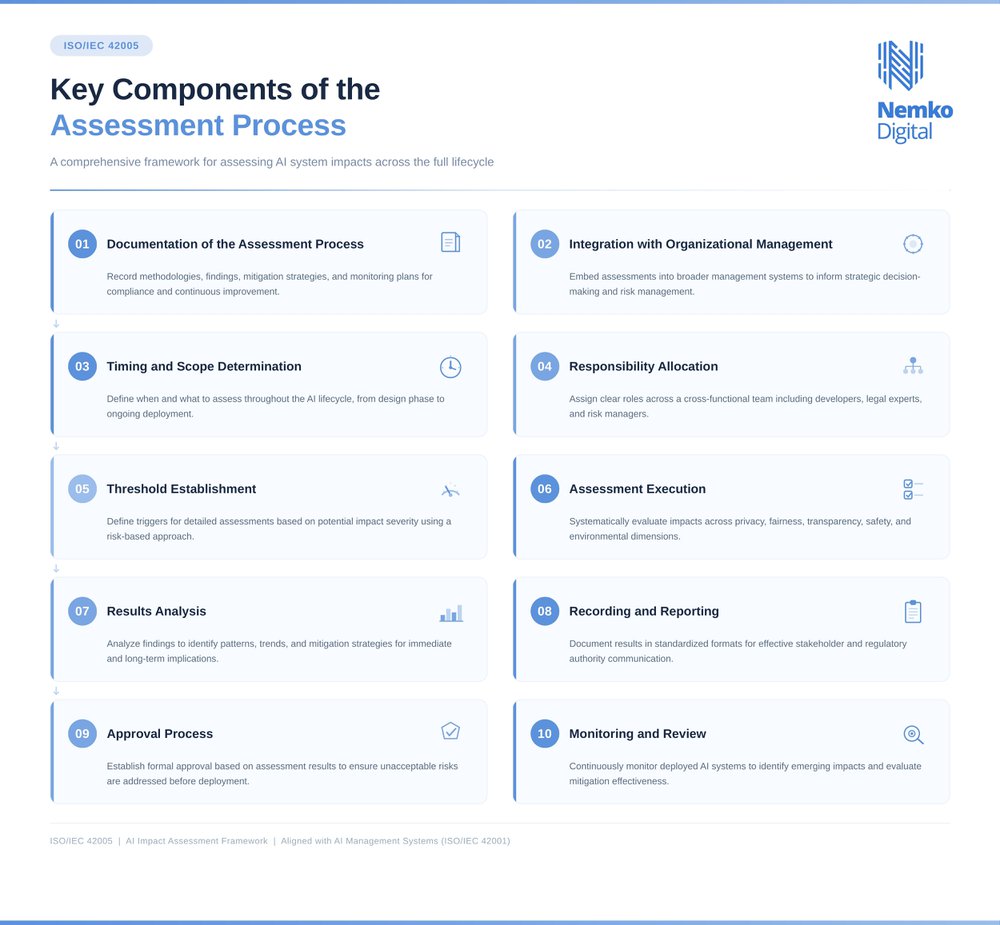

Key Components of the ISO/IEC 42005 Assessment Process

1. Documentation of the Assessment Process

Comprehensive documentation forms the foundation of effective impact assessment. This includes recording methodologies, findings, mitigation strategies, and ongoing monitoring plans. Proper documentation not only supports compliance efforts but also provides valuable insights for continuous improvement in AI system impact assessment processes.

2. Integration with Organizational Management

The standard emphasizes that impact assessments should not exist in isolation but should be integrated into broader organizational management systems. This integration ensures that assessment findings inform strategic decision-making and risk management processes, influencing the overall AI management system.

3. Timing and Scope Determination

Organizations must determine when assessments should be conducted throughout the AI lifecycle and what aspects of the system should be evaluated. Early assessment during the design phase can prevent costly modifications later, while ongoing assessments during deployment help identify emerging issues.

4. Responsibility Allocation

Clear assignment of roles and responsibilities is essential for effective impact assessment. The standard recommends establishing a cross-functional team that includes AI developers, legal experts, risk managers, and representatives of potentially affected stakeholder groups, so that the assessment reflects technical, legal, and societal perspectives.

5. Threshold Establishment

Organizations should define thresholds that trigger more detailed assessments based on potential impact severity. This risk-based approach allows for proportionate allocation of assessment resources and is an essential part of AI risk management.
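To make the threshold idea concrete, here is a minimal Python sketch of one possible risk-based trigger. The severity and likelihood scales, the multiplicative score, the threshold of 8, and the rule that any severe impact always escalates are illustrative assumptions; ISO/IEC 42005 does not prescribe particular scales or values.

```python
from enum import IntEnum


# Hypothetical ordinal scales; an organization would define its own.
class Severity(IntEnum):
    NEGLIGIBLE = 1
    MINOR = 2
    SIGNIFICANT = 3
    SEVERE = 4


class Likelihood(IntEnum):
    RARE = 1
    POSSIBLE = 2
    LIKELY = 3
    ALMOST_CERTAIN = 4


# Assumed threshold: scores at or above this value trigger a detailed
# impact assessment instead of a lightweight screening.
DETAILED_ASSESSMENT_THRESHOLD = 8


def assessment_depth(severity: Severity, likelihood: Likelihood) -> str:
    """Return 'detailed' or 'screening' for one identified potential impact."""
    # Assumed policy choice: severe impacts escalate regardless of likelihood.
    if severity == Severity.SEVERE:
        return "detailed"
    score = int(severity) * int(likelihood)
    return "detailed" if score >= DETAILED_ASSESSMENT_THRESHOLD else "screening"


if __name__ == "__main__":
    print(assessment_depth(Severity.MINOR, Likelihood.POSSIBLE))      # screening (score 4)
    print(assessment_depth(Severity.SIGNIFICANT, Likelihood.LIKELY))  # detailed (score 9)
    print(assessment_depth(Severity.SEVERE, Likelihood.RARE))         # detailed (override)
```

The severity override reflects a common design choice: a simple product score can let a rare but severe harm fall below the threshold, so it is escalated explicitly.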

6. Assessment Execution

The actual assessment involves systematic evaluation of potential impacts across various dimensions, including privacy, fairness, transparency, safety, and environmental considerations. This evaluation should consider both direct and indirect effects of the AI system.
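One way to keep the evaluation systematic is to check that every dimension has been analyzed for both direct and indirect effects. The sketch below assumes assessment notes are kept as a nested dictionary keyed by dimension and effect type; that structure and the function name `coverage_gaps` are hypothetical.

```python
# Dimensions named in the text; an organization may add others.
DIMENSIONS = ("privacy", "fairness", "transparency", "safety", "environmental")
EFFECT_TYPES = ("direct", "indirect")


def coverage_gaps(notes: dict[str, dict[str, str]]) -> list[tuple[str, str]]:
    """List (dimension, effect type) pairs that have no recorded analysis."""
    gaps = []
    for dimension in DIMENSIONS:
        for effect in EFFECT_TYPES:
            text = notes.get(dimension, {}).get(effect, "")
            if not text.strip():  # missing or blank entries count as gaps
                gaps.append((dimension, effect))
    return gaps


if __name__ == "__main__":
    notes = {
        "privacy": {"direct": "Processes customer contact data.",
                    "indirect": "Could enable profiling if outputs are combined."},
        "fairness": {"direct": "Error rates compared across age groups."},
    }
    for dimension, effect in coverage_gaps(notes):
        print(f"missing: {dimension} / {effect}")
```

A check like this only confirms that something was written for each pair; the quality of the analysis still depends on the assessors.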

7. Results Analysis

Assessment findings must be thoroughly analyzed to identify patterns, trends, and potential mitigation strategies. This analysis should consider both immediate impacts and longer-term implications, and its results should feed into the organization's wider AI management system.

8. Recording and Reporting

Results should be documented in a standardized format that facilitates communication with stakeholders and regulatory authorities. The standard provides guidance on effective reporting structures.
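A standardized format can be as simple as a typed record serialized to JSON. The following is a minimal sketch of one assumed schema; the field names, the `ImpactFinding` and `ImpactAssessmentRecord` classes, and the example system are hypothetical and are not a format defined by the standard.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import date


# Illustrative schema only; ISO/IEC 42005 does not define these field names.
@dataclass
class ImpactFinding:
    dimension: str               # e.g. "privacy", "fairness", "safety"
    affected_parties: list[str]  # individuals or groups who may be affected
    description: str
    severity: str                # organization-defined severity label
    mitigation: str


@dataclass
class ImpactAssessmentRecord:
    system_name: str
    lifecycle_stage: str         # e.g. "design", "deployment"
    assessed_on: date
    assessors: list[str]
    findings: list[ImpactFinding] = field(default_factory=list)

    def to_json(self) -> str:
        """Serialize the record, converting the date to ISO 8601 text."""
        data = asdict(self)      # nested findings become plain dicts
        data["assessed_on"] = self.assessed_on.isoformat()
        return json.dumps(data, indent=2, sort_keys=True)


if __name__ == "__main__":
    record = ImpactAssessmentRecord(
        system_name="loan-screening-model",  # hypothetical system
        lifecycle_stage="design",
        assessed_on=date(2025, 6, 1),
        assessors=["risk management", "legal"],
        findings=[ImpactFinding(
            dimension="fairness",
            affected_parties=["loan applicants"],
            description="Approval rates may differ across demographic groups.",
            severity="significant",
            mitigation="Add pre-deployment bias testing.",
        )],
    )
    print(record.to_json())
```

Keeping the record machine-readable makes it easier to compare assessments over time and to extract summaries for different audiences.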

9. Approval Process

Organizations should establish a formal approval process for AI systems based on impact assessment results. This process ensures that systems with unacceptable risks are modified or discontinued before deployment.
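An approval gate can be expressed as a rule over the residual risks that remain after mitigation. This sketch assumes a four-level residual-risk scale and hypothetical decision rules (level 4 blocks deployment, level 3 requires modification); each organization would set its own acceptance criteria.

```python
# Assumed residual-risk scale: 1 = low, 2 = moderate, 3 = high, 4 = unacceptable.
MODIFY_AT = 3        # hypothetical: high residual risk requires changes first
DISCONTINUE_AT = 4   # hypothetical: unacceptable risk blocks deployment


def approval_decision(residual_risk_levels: list[int]) -> str:
    """Return 'approve', 'modify', or 'discontinue' for a candidate AI system."""
    worst = max(residual_risk_levels, default=1)  # no identified risks -> lowest level
    if worst >= DISCONTINUE_AT:
        return "discontinue"
    if worst >= MODIFY_AT:
        return "modify"
    return "approve"


if __name__ == "__main__":
    print(approval_decision([1, 2]))     # approve
    print(approval_decision([2, 3]))     # modify
    print(approval_decision([1, 4, 3]))  # discontinue
```

Basing the decision on the worst remaining risk, rather than an average, keeps a single unacceptable impact from being diluted by many minor ones.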

10. Monitoring and Review

Impact assessment is not a one-time activity but an ongoing process. The standard recommends continuous monitoring of deployed AI systems to identify emerging impacts and evaluate the effectiveness of mitigation measures.
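Ongoing monitoring needs explicit conditions for reopening an assessment. The sketch below assumes three hypothetical triggers: a material change to the system, a fixed review interval, and drift in a monitored metric (for example, a fairness or error-rate measure) beyond a tolerance. The 180-day interval and 0.05 tolerance are placeholder values.

```python
from datetime import date, timedelta

# Placeholder policy values; an organization would set its own.
REVIEW_INTERVAL = timedelta(days=180)
DRIFT_TOLERANCE = 0.05


def reassessment_due(last_assessed: date, today: date,
                     baseline_metric: float, current_metric: float,
                     system_changed: bool = False) -> bool:
    """Return True if any assumed trigger calls for revisiting the assessment."""
    if system_changed:                            # e.g. retraining or a new use case
        return True
    if today - last_assessed >= REVIEW_INTERVAL:  # scheduled periodic review
        return True
    # Monitored metric has moved further from its assessed baseline than tolerated.
    return abs(current_metric - baseline_metric) > DRIFT_TOLERANCE


if __name__ == "__main__":
    print(reassessment_due(date(2025, 1, 1), date(2025, 3, 1), 0.80, 0.82))  # False
    print(reassessment_due(date(2025, 1, 1), date(2025, 3, 1), 0.80, 0.70))  # True
```

Combining event-driven and time-based triggers means a system is revisited both when something changes and when nothing obvious has changed for a long time.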

Implementation Strategies for ISO/IEC 42005

Organizations looking to implement ISO/IEC 42005 can benefit from a structured approach that aligns with their existing governance frameworks. According to Don Liyanage's technical memorandum on ISO/IEC 42005:2025, businesses should consider these implementation strategies:

Conduct Comprehensive AI System Impact Assessments

Begin by identifying potential benefits and risks associated with your AI systems. This assessment should consider impacts on individuals, groups, and broader societal structures. Organizations should leverage AI governance tooling and technologies to streamline this process and ensure consistency across different AI implementations.

Develop a Robust Governance Framework

Establish a governance structure that clearly defines roles and responsibilities throughout the AI lifecycle. This framework should promote transparency, accountability, and ethical considerations in AI development and deployment. Organizations with an existing AI management system, such as one based on ISO/IEC 42001, can integrate ISO/IEC 42005 guidance into their current framework rather than building a separate process.

Provide Comprehensive Employee Training

Ensure that employees understand the potential impacts of AI systems and their role in the assessment process. Training should cover key considerations such as accountability, transparency, bias mitigation, privacy protection, and safety measures. This knowledge empowers teams to identify and address potential issues proactively.

Stay Informed About Regulatory Developments

The regulatory landscape for AI is evolving rapidly. Organizations should maintain awareness of emerging regulations and standards that may affect their AI implementations. This vigilance helps ensure ongoing compliance and reduces the risk of regulatory penalties.

Prepare for Certification

ISO/IEC 42005 is a guidance standard, so organizations are not certified against it directly. Certification applies to management system standards such as ISO/IEC 42001, which requires AI system impact assessments as part of an AI management system, and such certification may become a competitive advantage or even a requirement in certain sectors. Well-documented assessment processes aligned with ISO/IEC 42005 help organizations prepare for it and follow recognized international good practice for AI management.

The Business Case for ISO/IEC 42005 Implementation

Implementing ISO/IEC 42005 offers significant business benefits beyond regulatory compliance. Organizations that adopt a structured approach to AI impact assessment can:

- Build Trust with Stakeholders: Demonstrating a commitment to responsible AI practices enhances reputation and builds trust with customers, partners, and regulators.

- Reduce Legal and Reputational Risks: Proactive identification and mitigation of potential negative impacts reduces the likelihood of legal challenges and reputational damage.

- Improve AI System Quality: Systematic assessment often identifies opportunities for improvement in AI system design and implementation, leading to more robust and effective solutions.

- Enhance Decision-Making: Impact assessments provide valuable insights that inform strategic decisions about AI investment and deployment.

- Gain Competitive Advantage: As AI ethics and responsibility become increasingly important to consumers and business partners, organizations with established assessment practices may gain a competitive edge.

Integration with Other AI Standards and Global Regulations

ISO/IEC 42005 is designed to work seamlessly alongside other standards within the ISO/IEC AI ecosystem, enabling organizations to build a unified and efficient approach to AI governance. Its impact assessment processes integrate naturally with ISO/IEC 42001, which defines requirements for AI management systems, and with ISO/IEC 23894, which focuses on AI risk management. By aligning these frameworks, organizations can streamline their governance activities, strengthen risk mitigation, and ensure consistent oversight across the AI lifecycle.

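This integrated approach is increasingly important as governments worldwide introduce new regulatory frameworks for AI. ISO/IEC 42005 can support compliance with regulations such as the EU AI Act, widely regarded as the most comprehensive AI regulation to date; for example, its structured assessment process can inform the fundamental rights impact assessment that the Act requires of certain deployers of high-risk AI systems.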

Organizations implementing ISO/IEC 42005 gain a significant advantage in navigating the complex landscape of global AI regulations. By following the standard's structured approach to impact assessment, businesses can more easily demonstrate compliance with various regulatory requirements while maintaining a consistent internal framework for AI governance.

Looking Forward: The Future of AI Impact Assessment

As AI technologies continue to evolve, impact assessment practices will need to adapt to address new challenges and opportunities. The publication of ISO/IEC 42005 represents a significant milestone in the development of standardized approaches to AI governance, but it is just one step in an ongoing journey.

Organizations that embrace the principles and practices outlined in ISO/IEC 42005 position themselves not only for compliance with current requirements but also for adaptability to future developments in AI regulation and best practices. By establishing robust assessment processes today, these organizations lay the groundwork for responsible AI innovation tomorrow.

Nemko Digital supports organizations throughout this journey by providing expertise, guidance, and practical tools to implement ISO/IEC 42005 effectively, helping them build trustworthy, compliant, and future‑ready AI systems.

Our AI Trust Services

Nemko Digital guides organizations through their AI governance and regulatory compliance, ensuring that AI is designed, built and deployed in a way that inspires trust and conforms to international law. The risks of AI are real and well known. We are here to help you turn those risks into opportunities.
