AI Index 2026 Report Shows Capability Gains Outpacing Control

The annual report says frontier models are now matching or exceeding human performance on selected benchmarks, including PhD-level science questions, multimodal reasoning, competition mathematics, and cybersecurity tasks. At the same time, the report shows uneven progress: some models still struggle with time, multi-step planning, financial analysis, and coherent video generation. That mix of progress and limitation matters for enterprises because it shows where AI capabilities are ready for deployment and where human review remains essential.

The investment picture is equally striking. Stanford reports that global corporate AI investment reached $581.7 billion in 2025, while private investment rose to $344.7 billion. The report also notes that AI adoption is spreading at historic speed, with generative AI reaching 53% population adoption within three years. For business leaders, the message is clear: AI is no longer an experimental tool. It is becoming an operational dependency, increasingly embedded in conversational AI delivered through chat interfaces and in emerging AI agents.

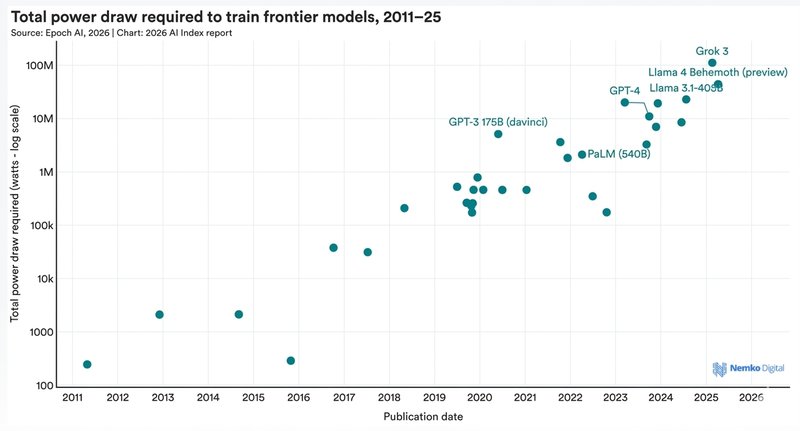

Energy, Transparency and AI Compliance Move to the Fore

The report’s environmental findings are among its most urgent. Stanford says Grok 4’s estimated training emissions reached 72,816 tons of CO2 equivalent, while AI data center power capacity rose to 29.6 GW. That concern aligns with the International Energy Agency’s latest analysis, which says electricity use from data centers is already significant and is set to keep rising as AI demand grows.

Transparency is also declining as model capability rises. Stanford’s Foundation Model Transparency Index fell to 40 points from 58, with the most capable models often disclosing the least information about training data, compute, risks, and usage policies. For organizations that must prove responsible use, this creates a clear governance gap. Nemko Digital’s AI Governance Services and AI Regulatory Compliance pages both reflect that need for structured oversight, risk control, and regulatory readiness, especially as AI distribution expands across vendors, platforms, and internal teams.

Workforce Effects Are Starting Earlier

The report also points to an entry-level squeeze. Stanford says employment among software developers aged 22–25 has fallen nearly 20% since 2024, even as older workers in the same field have held steady or grown. The International Labour Organization’s 2025 update on generative AI and jobs similarly warns that exposure is not evenly distributed and that some occupations face higher transformation pressure than others.

That finding is especially relevant for organizations that are already redesigning hiring, training, and workflow systems around AI. On labor-market demand, the AI Index analysis of job postings, both overall and in the US, shows that demand for AI skills varies across job markets: it is often concentrated in certain hubs, but there is also meaningful growth beyond the traditional high-tech hubs into smaller markets. In practice, that means tracking not just national totals, but also where the highest share of AI job postings is appearing, how demand for AI skills is shifting relative to non-AI roles, and how fast new requirements (such as AI agents and an agentic AI skill cluster) are entering mainstream skills data. Nemko Digital’s AI Management Systems and ISO/IEC 42001 point to the same operational need: governance structures that can support AI use, accountability, monitoring, and continuous improvement.
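To make the distinction between total counts and share concrete, here is a minimal Python sketch. The `MarketPostings` type, the market names, and every number in it are hypothetical illustrations, not figures or methods from the AI Index; it only shows how a smaller market can rank above a large hub once postings are compared by share rather than volume.

```python
from dataclasses import dataclass


@dataclass
class MarketPostings:
    """Job-posting counts for one labor market (hypothetical structure)."""
    market: str
    total_postings: int
    ai_postings: int


def ai_share(m: MarketPostings) -> float:
    """Fraction of a market's postings that request AI skills."""
    return m.ai_postings / m.total_postings if m.total_postings else 0.0


def rank_by_ai_share(markets: list[MarketPostings]) -> list[MarketPostings]:
    """Order markets from highest to lowest AI share of postings."""
    return sorted(markets, key=ai_share, reverse=True)


# Made-up illustrative counts, NOT data from the report.
sample = [
    MarketPostings("Hub A", 200_000, 9_000),
    MarketPostings("Smaller market B", 20_000, 1_200),
    MarketPostings("Smaller market C", 50_000, 500),
]

if __name__ == "__main__":
    for m in rank_by_ai_share(sample):
        print(f"{m.market}: {m.ai_postings} AI postings, share {ai_share(m):.1%}")
```

In this made-up sample, Hub A has the most AI postings in absolute terms, yet Smaller market B ranks first by share. That is the kind of signal a national total would hide.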

What Organizations Should Take From the Report

Stanford’s broader message is not that AI is slowing down. It is that AI is maturing into a system-level issue that touches infrastructure, talent, regulation, and trust all at once. For enterprises and public-sector bodies, that means AI strategy now needs the same discipline as cybersecurity, quality management, and compliance, and it needs to be grounded in human-centered artificial intelligence principles, not just performance metrics.

Operationally, this is where the full Stanford AI Index becomes useful as a recurring reference point: it helps AI experts and non-specialists alike gauge momentum across AI capabilities, adoption, and the workforce, including the shift toward a new AI skill cluster that blends automation, orchestration, and agentic workflows. Nemko Digital’s EU AI Regulations page reflects that shift, framing AI readiness as a matter of regulatory alignment, governance, and verifiable control, and treating AI as “work” that must be managed like any other critical system, not as a side project.
